Saving costs in the cloud with smarter caching - Part 1

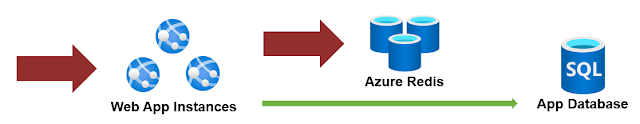

A cache is a component that stores data so that future requests for it can be served faster. For example, a web application handling many requests does not need to hit the backend database or microservice every time: it can remember the last value of a given computation or call and reuse it.

One particular flavor is the centralized cache, provided by tools such as Redis or Memcached: a central place where data is stored temporarily and that all instances of our app (and even other apps) can share. Its uses range from storing session data in a multi-server web application to keeping a value that is costly to calculate or obtain, for a performance advantage. A centralized cache is a lifesaver. But it tends to be overused, and in some scenarios we could reduce its usage or skip it completely, saving costs and improving performance in the process.

Reducing the usage of the centralized cache

Let's take an example of a web...
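To make the idea of "remembering the last value for a given computation or call" concrete, here is a minimal sketch of a purely in-process cache with a time-to-live (TTL). It is an illustration under stated assumptions, not the approach this article goes on to describe: `LocalTTLCache`, `get_or_compute` and `load_product_price` are hypothetical names, and the backend call is simulated by a counter. Because each app instance keeps its own copy, a hit costs no network round trip to the backend or to a central cache, at the price of instances possibly serving slightly different values until their entries expire.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class LocalTTLCache:
    """Hypothetical in-process cache: each app instance keeps its own recent values."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_seconds = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        # key -> (expiry timestamp, cached value)
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_compute(self, key: Hashable, compute: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            # Fresh hit: served from local memory, no remote call at all.
            return entry[1]
        # Miss or expired entry: do the costly call once and remember the result.
        value = compute()
        self._entries[key] = (now + self.ttl_seconds, value)
        return value


# Hypothetical stand-in for a slow backend database or microservice call.
backend_calls = 0


def load_product_price(product_id: int) -> int:
    global backend_calls
    backend_calls += 1
    return 100 * product_id


cache = LocalTTLCache(ttl_seconds=60)
for _ in range(5):
    price = cache.get_or_compute(("price", 42), lambda: load_product_price(42))

print(price, backend_calls)  # prints "4200 1": five lookups, one backend call
```

The sketch is not thread-safe and never evicts expired keys on its own; a real service would likely want a lock and a size bound (or a library such as `functools.lru_cache` combined with a TTL policy) before relying on something like this.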