Avoid Multiple Cache Refreshes: The Double Check Approach

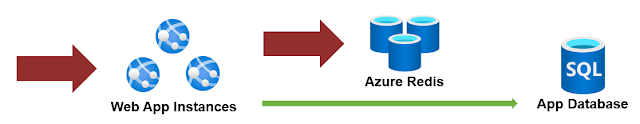

In previous articles, we've stressed the importance of caching for application performance. This time, we're discussing a small yet potent tip to get even more out of a cache.

A standard caching routine fetches a value from the cache and, if it's missing, generates it and stores it for future requests. This is functional and can be regarded as the default approach to caching.

However, a problem arises in a high-traffic application, such as a .NET Core web application or API, which must handle many concurrent requests. Suppose multiple requests reach this code simultaneously and each finds the value missing. Each of them then generates the value on its own, so you get multiple refreshes of the same value and several calls to SetValue.

To prevent this, we can employ a mutual-exclusion (mutex) lock to restrict multiple threads from accessing the sam...
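
To make the double-check idea from the title concrete, here is a minimal sketch rather than a definitive implementation. It assumes a plain in-memory `ConcurrentDictionary` as the cache and a single shared lock object. The names `Cache`, `RefreshLock`, `GenerateValue`, and `GetOrCreate` are hypothetical and chosen only for illustration. The value is checked once without the lock, so cache hits stay cheap, and once more inside the lock, so a thread that waited does not regenerate what another thread has just stored.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

public static class Program
{
    // Hypothetical in-memory cache standing in for whatever cache the application uses.
    private static readonly ConcurrentDictionary<string, string> Cache =
        new ConcurrentDictionary<string, string>();

    // One shared lock guarding the refresh path (an assumption of this sketch).
    private static readonly object RefreshLock = new object();

    private static int _generateCalls;

    // Hypothetical expensive operation; counts how many times it runs.
    private static string GenerateValue(string key)
    {
        Interlocked.Increment(ref _generateCalls);
        Thread.Sleep(100); // simulate slow work
        return "value-for-" + key;
    }

    public static string GetOrCreate(string key)
    {
        // First check, without the lock: the common cache-hit path.
        if (Cache.TryGetValue(key, out var value))
        {
            return value;
        }

        lock (RefreshLock)
        {
            // Second check, inside the lock: another thread may have
            // stored the value while this one was waiting.
            if (Cache.TryGetValue(key, out value))
            {
                return value;
            }

            value = GenerateValue(key);
            Cache[key] = value;
            return value;
        }
    }

    public static void Main()
    {
        // Simulate 20 concurrent requests for the same key.
        Parallel.For(0, 20, i => { GetOrCreate("report"); });

        Console.WriteLine("GenerateValue calls: " + _generateCalls);
        // prints "GenerateValue calls: 1"
    }
}
```

Without the second check, every thread that queued on the lock would regenerate the value in turn. Note that a single lock also serializes refreshes of unrelated keys, and that `lock` cannot contain an `await`; in asynchronous code a `SemaphoreSlim` with `WaitAsync` is a common substitute.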